The model of your choice doesn't fit in SRAM. You consider reducing its size or using a different model. TiGrIS tiles the computation instead, so the exact model runs on your target hardware.

If you’ve deployed ML on an embedded device, you’ve probably faced this issue. You still need it to work, so you quantize to int8, you prune, you try a smaller architecture. Eventually TFLite Micro’s AllocateTensors() returns kTfLiteOk and you move on… with a model that’s worse than what you trained and intended to deploy.

TiGrIS takes a completely different approach: instead of shrinking or manipulating the model, it tiles the computation to fit the memory budget you actually have.

What TiGrIS is

TiGrIS (Tiled Graph Inference Scheduler) is an ahead-of-time compiler and minimal C99 runtime for deploying ML models on resource-constrained embedded hardware. It is neither an interpreter nor a framework. It combines a compiler that emits a binary execution plan with a runtime that follows it. No malloc, no graph traversal, no surprises at inference time.

The design separates concerns cleanly: the compiler runs on your workstation where compute and memory are cheap, the runtime runs on the embedded device where every byte matters. Graph optimization, memory planning, and tiling decisions all happen at compile time.

Components

TiGrIS has three components that form a pipeline from trained model to inference:

The toolchain (tigris)

A Python CLI that ingests a model, analyzes its memory profile, compiles it into an execution plan, and optionally generates backend-specific C source. It currently imports ONNX, but the compiler operates on an internal graph intermediate representation (IR). The ingestion frontend is a thin layer, making it straightforward to add importers for other formats (TFLite, PyTorch ExportedProgram, etc.) as needed.

tigris analyze model.onnx -m 256K -f 4M

tigris compile model.onnx -m 128K -f 4M --xip -o model.tgrs

tigris codegen model.tgrs --backend esp-nn -o model.c

The -m flag sets the SRAM budget the compiler must respect, and -f sets the flash budget used for validation. --xip (execute in place) tells the compiler that model weights will be read directly from flash at runtime via memory-mapped I/O instead of being copied into RAM. This is the normal mode for embedded deployment, where weights are too large for SRAM but sit comfortably in flash.

codegen takes a compiled .tgrs plan and emits a standalone C file that runs inference. --backend selects which kernel implementations to use: reference (portable C), esp-nn (Espressif’s SIMD-optimized kernels for ESP32), or cmsis-nn (Arm’s optimized kernels). The same plan works with every backend, so you can pick the one that matches your target hardware.

The execution plan (.tgrs)

The compiler’s output is a binary artifact containing everything the runtime needs:

- Every operator call, in execution order

- Every tensor’s size, shape, quantization parameters, and memory pool assignment (SRAM, PSRAM, or flash)

- Per-channel multipliers and shifts (no float math on device)

- LZ4-compressed weight blocks (decompressed on the fly from flash)
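Per-channel multipliers and shifts let the device rescale int32 accumulators using integer math only. Below is a minimal sketch, assuming the gemmlowp/TFLite convention (a Q31 multiplier in [0.5, 1) plus a power-of-two shift) that TFLite Micro and CMSIS-NN kernels use. We have not confirmed that TiGrIS encodes its values exactly this way, and the function names are illustrative:

```c
#include <assert.h>
#include <stdint.h>

/* round(a * b / 2^31), i.e. the rounded high half of 2*a*b, saturating the
 * single overflow case INT32_MIN * INT32_MIN. Mirrors gemmlowp's
 * SaturatingRoundingDoublingHighMul. */
static int32_t sat_rounding_doubling_high_mul(int32_t a, int32_t b)
{
    if (a == INT32_MIN && b == INT32_MIN) {
        return INT32_MAX;
    }
    const int64_t ab = (int64_t)a * (int64_t)b;
    const int64_t nudge = ab >= 0 ? (1LL << 30) : (1 - (1LL << 30));
    /* C integer division truncates toward zero, as in the reference code. */
    return (int32_t)((ab + nudge) / (1LL << 31));
}

/* x / 2^exponent rounded to nearest, ties away from zero. Relies on >> being
 * an arithmetic shift for negative values, as on GCC/Clang targets. */
static int32_t rounding_divide_by_pot(int32_t x, int exponent)
{
    assert(exponent >= 0 && exponent <= 30);
    const int32_t mask = (int32_t)((1LL << exponent) - 1);
    const int32_t remainder = x & mask;
    const int32_t threshold = (mask >> 1) + (x < 0 ? 1 : 0);
    return (x >> exponent) + (remainder > threshold ? 1 : 0);
}

/* acc * M, where M = (multiplier / 2^31) * 2^shift and multiplier is a Q31
 * value in [2^30, 2^31). When shift > 0, acc * 2^shift must fit in int32. */
static int32_t multiply_by_quantized_multiplier(int32_t acc, int32_t multiplier, int shift)
{
    const int left_shift = shift > 0 ? shift : 0;
    const int right_shift = shift > 0 ? 0 : -shift;
    return rounding_divide_by_pot(
        sat_rounding_doubling_high_mul(acc * (1 << left_shift), multiplier),
        right_shift);
}

/* Requantize one int32 accumulator of an output channel to int8. */
int8_t requantize_s8(int32_t acc, int32_t multiplier, int shift, int32_t out_zero_point)
{
    int32_t v = multiply_by_quantized_multiplier(acc, multiplier, shift) + out_zero_point;
    if (v < -128) v = -128;
    if (v > 127) v = 127;
    return (int8_t)v;
}
```

For example, a real scale of 0.25 is stored as multiplier 2^30 (0.5 in Q31) with shift -1. An accumulator of 10 then maps to 2.5, which rounds away from zero to 3.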

There’s no flatbuffer parsing, no protobuf, no interpreter dispatch loop. Just a flat array of op structs the runtime walks sequentially.
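To make "a flat array of op structs" concrete, here is a hypothetical sketch of such a plan and the loop that walks it. The op_t layout, op types, and kernels are illustrative only and are not the real .tgrs format. The point is that dispatch costs one table lookup per op, because the compiler has already fixed every offset:

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical op record: every field is resolved by the compiler. */
typedef enum { OP_RELU = 0, OP_ADD_SCALAR = 1, OP_COUNT } op_type_t;

typedef struct {
    uint8_t  type;     /* an op_type_t value */
    uint16_t in_off;   /* input offset in the SRAM arena, in bytes */
    uint16_t out_off;  /* output offset in the SRAM arena, in bytes */
    uint16_t len;      /* number of int8 elements */
    int8_t   param;    /* op-specific immediate, e.g. the scalar to add */
} op_t;

typedef void (*kernel_fn)(const op_t *op, int8_t *arena);

static void k_relu(const op_t *op, int8_t *arena)
{
    for (int i = 0; i < op->len; ++i) {
        const int8_t x = arena[op->in_off + i];
        arena[op->out_off + i] = x > 0 ? x : 0;
    }
}

static void k_add_scalar(const op_t *op, int8_t *arena)
{
    for (int i = 0; i < op->len; ++i) {
        const int v = arena[op->in_off + i] + op->param;
        arena[op->out_off + i] = (int8_t)(v > 127 ? 127 : (v < -128 ? -128 : v));
    }
}

/* Dispatch table indexed by op type: no parsing, no graph traversal. */
static const kernel_fn kernels[OP_COUNT] = { k_relu, k_add_scalar };

/* Execute the ops in order. Returns 0 on success, -1 on an unknown op type. */
int run_plan(const op_t *ops, size_t n_ops, int8_t *arena)
{
    assert(ops != NULL && arena != NULL);
    for (size_t i = 0; i < n_ops; ++i) {
        if (ops[i].type >= OP_COUNT) return -1;
        kernels[ops[i].type](&ops[i], arena);
    }
    return 0;
}
```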

The runtime (tigris-runtime)

A C99 library (~8KB of code) that reads a .tgrs plan and executes it with zero heap allocation: it takes a pre-allocated SRAM arena and a pointer to the plan in flash, and runs inference. The runtime itself is model-agnostic; all model-specific information lives in the plan.

Pluggable kernel backends

The binary plan doesn’t contain any executable code. It solely describes what to compute, not how. At build time, you pick a kernel backend that provides the actual operator implementations:

| Backend | Target | Notes |
|---|---|---|
| reference | Any C99 | Portable, ~600 ms on ESP32-S3 (DS-CNN int8) |
| esp-nn | ESP32 family | Espressif’s optimized kernels (SIMD on S3/P4), ~30 ms on S3 (DS-CNN int8) |
| cmsis-nn | Cortex-M family | Arm’s optimized kernels (DSP on M4/M7, Helium on M55) |

Same model, same plan, different #include. The backend interface is a single struct of function pointers, so adding a new backend means filling in that struct with your kernel implementations. Want to target a new chip with vendor-optimized kernels? Write a thin backend, plug it in, and you’re ready to go.
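Here is a sketch of what such an interface could look like. The names tgrs_backend_t, its fields, and both backends are hypothetical, and the real TiGrIS struct will differ. The "fast" backend is a plain-C stand-in for vendor SIMD kernels. For demonstration, the backend is chosen through a pointer rather than at build time:

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical backend interface: one function pointer per operator. */
typedef struct {
    const char *name;
    void (*relu_s8)(const int8_t *in, int8_t *out, size_t n);
    void (*add_s8)(const int8_t *a, const int8_t *b, int8_t *out, size_t n);
} tgrs_backend_t;

static int8_t sat8(int v) { return (int8_t)(v > 127 ? 127 : (v < -128 ? -128 : v)); }

static void ref_relu(const int8_t *in, int8_t *out, size_t n)
{
    for (size_t i = 0; i < n; ++i) out[i] = in[i] > 0 ? in[i] : 0;
}

static void ref_add(const int8_t *a, const int8_t *b, int8_t *out, size_t n)
{
    for (size_t i = 0; i < n; ++i) out[i] = sat8(a[i] + b[i]);
}

/* Stand-in for a vendor-optimized kernel: same math, 4-way unrolled. */
static void fast_add(const int8_t *a, const int8_t *b, int8_t *out, size_t n)
{
    size_t i = 0;
    for (; i + 4 <= n; i += 4) {
        out[i]     = sat8(a[i]     + b[i]);
        out[i + 1] = sat8(a[i + 1] + b[i + 1]);
        out[i + 2] = sat8(a[i + 2] + b[i + 2]);
        out[i + 3] = sat8(a[i + 3] + b[i + 3]);
    }
    for (; i < n; ++i) out[i] = sat8(a[i] + b[i]);
}

const tgrs_backend_t backend_reference = { "reference", ref_relu, ref_add };
/* A new backend overrides only what it accelerates and reuses the rest. */
const tgrs_backend_t backend_fast = { "fast", ref_relu, fast_add };

/* Runtime-side code only ever calls through the struct. */
void run_residual(const tgrs_backend_t *be, const int8_t *x, const int8_t *skip,
                  int8_t *out, size_t n)
{
    assert(be != NULL && be->relu_s8 != NULL && be->add_s8 != NULL);
    be->add_s8(x, skip, out, n);
    be->relu_s8(out, out, n);  /* elementwise, so in-place is safe */
}
```

Because the runtime only calls through the struct, both backends produce identical results for the same inputs; only speed differs.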

Memory hierarchy

Most embedded devices don’t have just one kind of memory. They have a hierarchy with wildly different speeds and sizes. TiGrIS can be made aware of this hierarchy and therefore plan for it at compile time.

SRAM is the fast, scarce resource. On a typical Cortex-M or ESP32, you get 256–512KB. This is where activations and scratch buffers live during inference. The -m flag sets this budget, and the compiler guarantees it won’t be exceeded.

PSRAM (or any slower external RAM) is required for multi-stage models, meaning any model whose activations exceed the SRAM budget. An ESP32-S3 ships with 2-16 MB depending on variant. It’s 5-10x slower than SRAM, but much larger. Intermediate tensors spill from SRAM to PSRAM between stages, and the compiler schedules cold tensors there when they aren’t on the hot path. You specify it as a second -m flag: -m 256K -m 4M means 256KB fast + 4MB slow. The compiler decides what goes where. Tensors accessed frequently or by SIMD kernels stay in SRAM, while larger tensors that can tolerate the latency get placed in PSRAM. Models that fit in a single stage can run without PSRAM.

Flash stores model weights. With --xip, weights are read directly from flash via memory-mapped I/O at inference time and are never copied into RAM. This is critical because weights are often the largest part of the model. A DS-CNN’s weights are ~80KB, but a MobileNetV2’s run to several megabytes. Without XIP, those weights would eat your entire SRAM budget before activations even enter the picture. The -f flag sets the flash budget so the compiler can validate that the model actually fits on your target’s storage.

The compiler’s memory planner sees all three tiers. When it runs USMP (Unified Static Memory Planning), it packs tensor lifetimes into the SRAM arena first, spills to PSRAM when necessary, and maps weights to flash. The resulting plan bakes in every address and every transfer. The runtime doesn’t make placement decisions.
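The packing step can be illustrated with greedy-by-size placement, a classic static memory planning heuristic. It places the largest buffer first, then puts each remaining buffer at the lowest offset that does not collide with an already-placed buffer whose lifetime overlaps. This is a generic sketch, not TiGrIS's USMP implementation. It models a single SRAM pool with no spilling, and buf_t and plan_arena are hypothetical names:

```c
#include <assert.h>
#include <stddef.h>

#define MAX_BUFS 64

typedef struct {
    size_t size;    /* bytes */
    int first_use;  /* index of the producing op */
    int last_use;   /* index of the last consuming op */
    size_t offset;  /* assigned arena offset (output) */
} buf_t;

static int lifetimes_overlap(const buf_t *a, const buf_t *b)
{
    return a->first_use <= b->last_use && b->first_use <= a->last_use;
}

/* Assign offsets in place and return the arena size needed. */
size_t plan_arena(buf_t *bufs, size_t n)
{
    int placed[MAX_BUFS] = {0};
    size_t arena = 0;
    assert(n <= MAX_BUFS);

    for (size_t step = 0; step < n; ++step) {
        /* Pick the largest buffer not yet placed. */
        size_t best = n;
        for (size_t i = 0; i < n; ++i)
            if (!placed[i] && (best == n || bufs[i].size > bufs[best].size))
                best = i;

        buf_t *b = &bufs[best];
        size_t cand = 0;
        int moved = 1;
        while (moved) {  /* repeat until a full pass finds no collision */
            moved = 0;
            for (size_t j = 0; j < n; ++j) {
                if (!placed[j] || !lifetimes_overlap(b, &bufs[j])) continue;
                const buf_t *p = &bufs[j];
                if (cand < p->offset + p->size && p->offset < cand + b->size) {
                    cand = p->offset + p->size;  /* every skipped offset collides with p */
                    moved = 1;
                }
            }
        }
        b->offset = cand;
        placed[best] = 1;
        if (cand + b->size > arena) arena = cand + b->size;
    }
    return arena;
}
```

For a four-op chain with buffers of 100, 200, 50, and 100 bytes, this packs into a 300-byte arena instead of the 450 bytes a no-reuse layout needs, because buffers with disjoint lifetimes share offsets.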

How tiling works

TiGrIS uses three techniques, applied in order, to fit a model into a given SRAM budget.

Temporal partitioning is the first step. The compiler walks the operator graph and groups consecutive ops into stages such that each stage’s live tensors fit in the budget. Between stages, intermediate tensors are spilled to slow memory (PSRAM) and reloaded when needed. This is analogous to register spilling in a traditional compiler, but for tensor memory. If the model already fits in a single stage, no partitioning is needed.
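A greedy version of this partitioning fits in a few dozen lines. The sketch below is a simplified model and an assumption, not TiGrIS's algorithm. Ops follow a fixed topological order, and each tensor has one live interval from its producer to its last consumer. A tensor produced before a stage and consumed after it is parked in slow memory for that stage. Fragmentation is ignored, so bytes are summed rather than packed. tensor_t and partition are hypothetical names:

```c
#include <assert.h>
#include <stddef.h>

typedef struct {
    size_t size;   /* bytes */
    int producer;  /* op index that writes the tensor */
    int consumer;  /* op index of its last reader */
} tensor_t;

/* Bytes that must be in SRAM at op j when ops [a, b] form one stage. */
static size_t resident_bytes(const tensor_t *t, size_t n_t, int a, int b, int j)
{
    size_t sum = 0;
    for (size_t i = 0; i < n_t; ++i) {
        int live = t[i].producer <= j && j <= t[i].consumer;
        int crosses_stage = t[i].producer < a && t[i].consumer > b;  /* spilled */
        if (live && !crosses_stage) sum += t[i].size;
    }
    return sum;
}

static size_t stage_peak(const tensor_t *t, size_t n_t, int a, int b)
{
    size_t peak = 0;
    for (int j = a; j <= b; ++j) {
        size_t r = resident_bytes(t, n_t, a, b, j);
        if (r > peak) peak = r;
    }
    return peak;
}

/* Grow each stage while its peak fits the budget. Writes the first op of each
 * stage to stage_start and returns the stage count. A one-op stage that still
 * exceeds the budget is where spatial tiling has to take over. */
int partition(const tensor_t *t, size_t n_t, int n_ops, size_t budget, int *stage_start)
{
    int n_stages = 0;
    int a = 0;
    assert(n_ops > 0);
    while (a < n_ops) {
        int b = a;
        while (b + 1 < n_ops && stage_peak(t, n_t, a, b + 1) <= budget) ++b;
        stage_start[n_stages++] = a;
        a = b + 1;
    }
    return n_stages;
}
```

In the test graph, a 40-byte skip connection spans all four ops. At a 200-byte budget the whole graph is one stage. At 130 bytes the skip tensor must be spilled during the middle ops, which yields three stages.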

Spatial tiling handles stages where even a single operator’s input and output tensors exceed the budget. A convolutional layer doesn’t need the entire input tensor at once; each output pixel reads only a small spatial window. If you process output rows in strips, say 24 rows at a time instead of all 96, you only need to hold one strip of input and one strip of output in SRAM simultaneously. Peak memory drops roughly in proportion to the strip height, plus a few halo rows per strip. The compiler determines how many strips are needed, how many halo rows adjacent strips must share (for a single convolution, the kernel height minus the stride), and where to spill intermediates.
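The row arithmetic behind strip tiling is short. For a convolution with kernel height k, stride s, and top padding pad, output rows [o0, o1) read input rows [o0*s - pad, (o1 - 1)*s - pad + k). As a result, neighboring strips share k - s halo rows. Below is a minimal sketch, assuming dilation 1 and int8 tensors; strip_t and plan_strips are hypothetical names:

```c
#include <assert.h>
#include <stddef.h>

typedef struct {
    int out_begin, out_end;  /* output rows [begin, end) */
    int in_begin, in_end;    /* input rows read, [begin, end), clamped to the tensor */
} strip_t;

/* Input rows needed by output rows [o0, o1). Rows that fall into the padding
 * are clamped away; the kernel supplies zeros for them. */
static strip_t strip_rows(int o0, int o1, int k, int s, int pad, int h_in)
{
    strip_t st = { o0, o1, o0 * s - pad, (o1 - 1) * s - pad + k };
    if (st.in_begin < 0) st.in_begin = 0;
    if (st.in_end > h_in) st.in_end = h_in;
    return st;
}

/* Split h_out output rows into strips of at most rows_per_strip rows. Fills
 * strips (caller provides enough room) and returns the peak int8 bytes a
 * single strip needs: its input rows plus its output rows. */
size_t plan_strips(int h_in, int h_out, int w_in, int w_out, int c_in, int c_out,
                   int k, int s, int pad, int rows_per_strip,
                   strip_t *strips, int *n_strips)
{
    size_t peak = 0;
    int n = 0;
    assert(rows_per_strip > 0);
    for (int o0 = 0; o0 < h_out; o0 += rows_per_strip) {
        int o1 = o0 + rows_per_strip < h_out ? o0 + rows_per_strip : h_out;
        strip_t st = strip_rows(o0, o1, k, s, pad, h_in);
        size_t bytes = (size_t)(st.in_end - st.in_begin) * w_in * c_in
                     + (size_t)(o1 - o0) * w_out * c_out;
        if (bytes > peak) peak = bytes;
        strips[n++] = st;
    }
    *n_strips = n;
    return peak;
}
```

Consider a 3x3, stride-1 convolution on a 96x96x16 tensor split into four 24-row strips. The peak drops from 294,912 bytes (full input plus full output) to 76,800 bytes. That is about 26% rather than a clean 25%, because each interior strip carries two halo rows.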

This is a well-known technique. MCUNet published the idea in 2021 and showed results on models found by neural architecture search. TiGrIS applies it to arbitrary models.

Chain tiling is an optimization on top of spatial tiling. When consecutive stages are all spatially tileable (e.g. Conv followed by DW Conv followed by PW Conv), the compiler can fuse them into a single streaming pass. The intermediate tensors between fused ops never fully materialize in the arena. This significantly reduces both memory and the number of PSRAM round-trips.
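Chain tiling applies the same row arithmetic backwards through the fused ops: to emit r output rows, a layer with kernel height k and stride s needs (r - 1)*s + k input rows. Below is a sketch under that assumption, with padding and ring-buffer bookkeeping ignored. layer_t and the helper names are hypothetical. The shapes are modeled on MobileNetV1's first block at a 128x128 input (3x3 stride-2 conv to 32 channels, 3x3 depthwise, 1x1 pointwise to 64 channels):

```c
#include <assert.h>
#include <stddef.h>

typedef struct {
    int k;         /* kernel height */
    int s;         /* stride along H */
    int height;    /* output height of this layer */
    int width;     /* output width of this layer */
    int channels;  /* output channels of this layer */
} layer_t;

/* Walk the chain backwards. rows[i] receives how many rows of layer i's
 * output must be buffered so the last layer can emit out_rows rows; the
 * return value is the number of chain-input rows needed. */
int band_rows(const layer_t *chain, int n, int out_rows, int *rows)
{
    assert(n > 0 && out_rows > 0);
    int r = out_rows;
    for (int i = n - 1; i >= 0; --i) {
        rows[i] = r;
        r = (r - 1) * chain[i].s + chain[i].k;  /* input rows layer i reads */
    }
    return r;
}

/* int8 bytes for the fused intermediates (outputs of layers 0..n-2) as bands. */
size_t fused_intermediate_bytes(const layer_t *chain, int n, const int *rows)
{
    size_t total = 0;
    for (int i = 0; i < n - 1; ++i)
        total += (size_t)rows[i] * chain[i].width * chain[i].channels;
    return total;
}

/* int8 bytes if the same intermediates were fully materialized. */
size_t full_intermediate_bytes(const layer_t *chain, int n)
{
    size_t total = 0;
    for (int i = 0; i < n - 1; ++i)
        total += (size_t)chain[i].height * chain[i].width * chain[i].channels;
    return total;
}
```

Emitting one row of the pointwise output at a time needs 7 input rows, a 3-row band of the first conv's output, and a 1-row band of the depthwise output. That is 8,192 bytes of intermediates in total, instead of the 262,144 bytes needed to materialize both.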

Benchmarks

We compared TiGrIS against TFLite Micro on the same hardware with two models: DS-CNN for keyword spotting (from MLPerf Tiny) and MobileNetV1 for image classification, on an ESP32-S3 dev board. Both models were int8 quantized for the main comparison; DS-CNN was also run in float32.

The benchmark suite, raw logs, and tooling are available at tigris-bench. All results were collected on remote hardware via SiliconRig with ESP-IDF v5.4 and esp-tflite-micro ~1.3.1.

Hardware

Board: ESP32-S3-DevKitC-1 (N16R8)

| Resource | Spec |
|---|---|
| CPU | Dual-core Xtensa LX7 @ 240 MHz |
| SRAM | 512 KB (384 KB usable) |
| PSRAM | 8 MB octal SPI (80 MHz) |
| Flash | 16 MB quad SPI |

Both frameworks run single-threaded on CPU0 at 240 MHz. PSRAM serves as slow memory for tiled intermediate spills. Flash stores model weights (TiGrIS via XIP, TFLM via compiled-in C arrays).

Methodology

- Warmup: 3 inference runs discarded

- Measurement: 10 timed runs using esp_timer_get_time() (microsecond resolution)

- Input: deterministic (all-1 for int8)

- Watchdogs: disabled to prevent timing interference

- Reported: mean latency across 10 runs
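The measurement protocol above amounts to a small harness. Here is a host-side sketch. bench_mean_us and count_calls are hypothetical names, and host_now_us uses the standard C clock() as a portable stand-in for ESP-IDF's esp_timer_get_time(), which returns microseconds as int64_t. On the device you would pass esp_timer_get_time directly and replace count_calls with your own wrapper around the inference call:

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <time.h>

typedef void (*infer_fn)(void *ctx);
typedef int64_t (*clock_us_fn)(void);

/* Portable host stand-in for esp_timer_get_time(): processor time in us. */
int64_t host_now_us(void)
{
    return (int64_t)clock() * 1000000 / CLOCKS_PER_SEC;
}

/* Discard `warmup` runs, then time `runs` inferences individually and
 * return the mean latency in microseconds. */
double bench_mean_us(infer_fn infer, void *ctx, clock_us_fn now_us, int warmup, int runs)
{
    assert(infer != NULL && now_us != NULL && runs > 0);
    for (int i = 0; i < warmup; ++i) {
        infer(ctx);
    }
    int64_t total_us = 0;
    for (int i = 0; i < runs; ++i) {
        const int64_t t0 = now_us();
        infer(ctx);
        total_us += now_us() - t0;
    }
    return (double)total_us / runs;
}

/* Placeholder workload: counts how many times it was invoked. */
void count_calls(void *ctx)
{
    ++*(int *)ctx;
}
```

With the settings above, the call is bench_mean_us(infer, ctx, now_us, 3, 10), so the workload runs 13 times and only the last 10 are timed.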

Both runtimes use ESP-NN SIMD kernels for int8, which isolates the framework overhead: same kernels, same hardware, different scheduling.

Case A: DS-CNN keyword spotting

DS-CNN is a depthwise-separable CNN that classifies 1-second audio clips into 12 keyword classes. With about 26K parameters, it is small enough to fit entirely in SRAM.

This is the “model fits” baseline. Tiling is not needed here. The question is simply: how much overhead does TiGrIS’s scheduling add when the model already fits?

| Configuration | dtype | Kernel | Latency (ms) | SRAM (KB) | Flash (KB) |
|---|---|---|---|---|---|
| TiGrIS f32 ref | f32 | reference | 539.67 | 256 | 89 |
| TFLM f32 | f32 | default | 456.43 | 256 | 91 |
| TiGrIS i8 ref | int8 | reference | 599.66 | 256 | 26 |
| TiGrIS i8 ESP-NN | int8 | ESP-NN | 29.45 | 256 | 26 |
| TFLM i8 | int8 | ESP-NN | 30.49 | 256 | 41 |

The production-relevant comparison is the last two rows, which use the same ESP-NN SIMD kernels. TiGrIS is 1.04 ms (~3%) faster, and that difference is framework overhead: flat struct dispatch vs. flatbuffer interpretation. Negligible in practice.

On flash usage, the TiGrIS plan is 26 KB vs TFLM’s 41 KB (37% smaller) because weights are stored as raw int8 without flatbuffer wrapping.

When the model fits in SRAM, the two frameworks are effectively equivalent.

Case B: MobileNetV1 image classification

MobileNetV1 (standard 1.0x, 13 depthwise-separable blocks) at a 128x128 input has about 3.2M parameters. Peak activation memory is around 232 KB, which pushes the limits of what fits in the ESP32-S3’s usable SRAM.

The ESP32-S3 has 512 KB of SRAM on paper, of which roughly 384 KB is usable. After firmware overhead (BSS, stack, IDF internals), the largest tensor arena that can be statically allocated is about 274 KB. We tested TFLM at both 256 KB and 270 KB, and AllocateTensors() fails at both sizes for this model. The failure is not a consequence of the budgets we picked; the arena cannot grow past that hard ceiling.

| Configuration | SRAM budget | Latency (ms) | Overhead | Stages | Tiling strategy |

|---|---|---|---|---|---|

| TiGrIS @256K (baseline) | 256 KB | 1208.46 | 1 | no tiling needed | |

| TiGrIS @128K | 128 KB | 1218.36 | +0.8% | 3 | 2 chain-tiled stages |

| TiGrIS @64K | 64 KB | 1448.38 | +19.8% | 9 | 8 spatially-tiled stages |

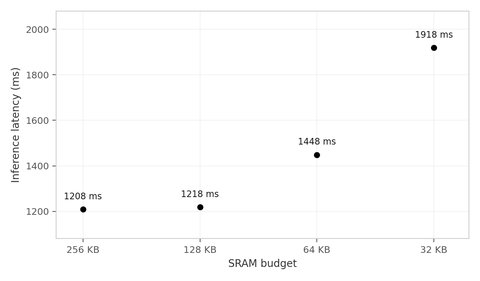

| TiGrIS @32K | 32 KB | 1918.38 | +58.7% | 13 | 12 spatially-tiled stages |

| TFLM @270K | 270 KB | arena too small | |||

| TFLM @256K | 256 KB | arena too small |

All TiGrIS configurations use the ESP-NN SIMD backend. TFLM could not allocate tensors for this model at any arena size the hardware can provide (AllocateTensors() returns kTfLiteArenaRequired at both 256 KB and 270 KB; 274 KB is the static-allocation ceiling). Any runtime that allocates all tensors upfront would hit the same wall.

TiGrIS produces correct output at every budget level, down to 32 KB (about 7x below the model’s 232 KB peak). The compiler partitions the model into stages that fit the budget and spills intermediate tensors to PSRAM between stages.

The overhead curve is sublinear. Halving the budget does not double the latency:

- 256K → 128K: -50% memory, +0.8% latency (chain tiling)

- 128K → 64K: -50% memory, +18.9% latency (spatial tiling kicks in)

- 64K → 32K: -50% memory, +32.4% latency (more tile passes)

The 256K to 128K reduction is nearly free. The compiler uses chain tiling to fuse consecutive operators (Conv + DW Conv + PW Conv) into a single streaming pass. The intermediate tensors between fused ops never fully materialize in the arena, so memory drops with almost no latency cost.

Below 128K, the compiler switches to spatial tiling, processing the feature map in horizontal strips. Each strip must re-read from PSRAM the halo rows it shares with its neighbors, so the cost grows with the number of passes. At 32K the overhead is 59%, which is still usable for many applications (periodic classification, anomaly detection, wake word).

What the stages mean

- @256K: 1 normal stage, the whole model runs flat (actual peak 232 KB)

- @128K: 1 normal + 2 chain-tiled, early layers fused, late layers flat

- @64K: 1 normal + 8 spatially-tiled, most layers processed in multi-pass strips

- @32K: 1 normal + 12 spatially-tiled, nearly every layer is tiled

Observations

The kernel backend dominates latency. The roughly 20x speedup from reference C to ESP-NN SIMD (599.66 ms vs. 29.45 ms for int8 DS-CNN) is far larger than any framework overhead. If you are optimizing for latency on a specific target, the kernel backend matters more than the scheduling layer.

Memory planning is what differentiates the frameworks. When the model fits, both runtimes perform within 3% of each other. When it doesn’t fit, tiling turns an impossible deployment into a latency-memory trade-off.

Chain tiling is nearly free. The 256K to 128K reduction costs under 1% latency because the compiler fuses depthwise-separable blocks into streaming chains. The intermediate tensors never fully hit the arena. This is where tiling pays for itself.

The overhead curve bends; it doesn’t explode. Each halving of the budget costs more than the previous one (+0.8%, then +18.9%, then +32.4%), but an 8x reduction in SRAM still raises latency by only 59%. The compiler adapts its strategy as the budget shrinks: chain fusion first, then spatial tiling with progressively finer strips.

What’s next

- Tiling support for more operator classes. Currently, ops that collapse or reshape spatial dimensions (Flatten, Reshape, Gemm, Softmax, ReduceMean) are untileable and force the compiler to keep their full tensors in SRAM. Extending tiling to cover these cases would allow tighter budgets on models with large fully-connected layers.

- PSRAM-aware spill scheduling. Currently all inter-stage spills go to PSRAM uniformly. Smarter placement based on tensor access patterns and reuse distance could reduce the number of slow-memory round-trips, especially at small budgets.

- Tiling along non-height axes. The current spatial tiling always splits along the height dimension. For operators with wide but shallow feature maps, width-axis or channel-axis tiling could be more effective.

Try it

TiGrIS is open source under Apache 2.0.

$ pip install tigris-ml

$ tigris analyze mobilenetv2.onnx -m 256K -f 16M

╭──────────────────────── TiGrIS - mobilenetv2 ────────────────────────╮

│ Operators 65 │

│ Tensors 248 (66 activations) │

│ Peak memory (naive) 4.59 MiB │

│ Largest tensor 1x96x112x112 (4.59 MiB) │

│ Dtype float32 │

╰──────────────────────────────────────────────────────────────────────╯

╭──────────────────────────────── SRAM ────────────────────────────────╮

│ Budget 256.00 KiB │

│ Scheduled peak 254.62 KiB (5.4% of naive peak) │

│ Stages 42 │

│ Spill / reload I/O 24.56 MiB / 25.59 MiB │

│ │

│ Need tiling 31 of 42 stages │

│ tileable 6 (54 tiles, max halo 2) │

╰──────────────── PASS - tiling resolves all stages ─────────────────╯

╭──────────────────────────────── Flash ───────────────────────────────╮

│ Budget 16.00 MiB │

│ Weight data 13.31 MiB │

│ Plan overhead 0.01 MiB │

│ Plan (est.) 13.32 MiB │

│ Plan INT8 (est.) 3.34 MiB │

╰─────────────────────── PASS - plan fits ───────────────────────────╯

The model’s naive peak is 4.59 MiB, but TiGrIS schedules it into 256 KiB of SRAM through temporal partitioning and spatial tiling. No hardware is required to run analyze.

GitHub: github.com/raws-labs/tigris